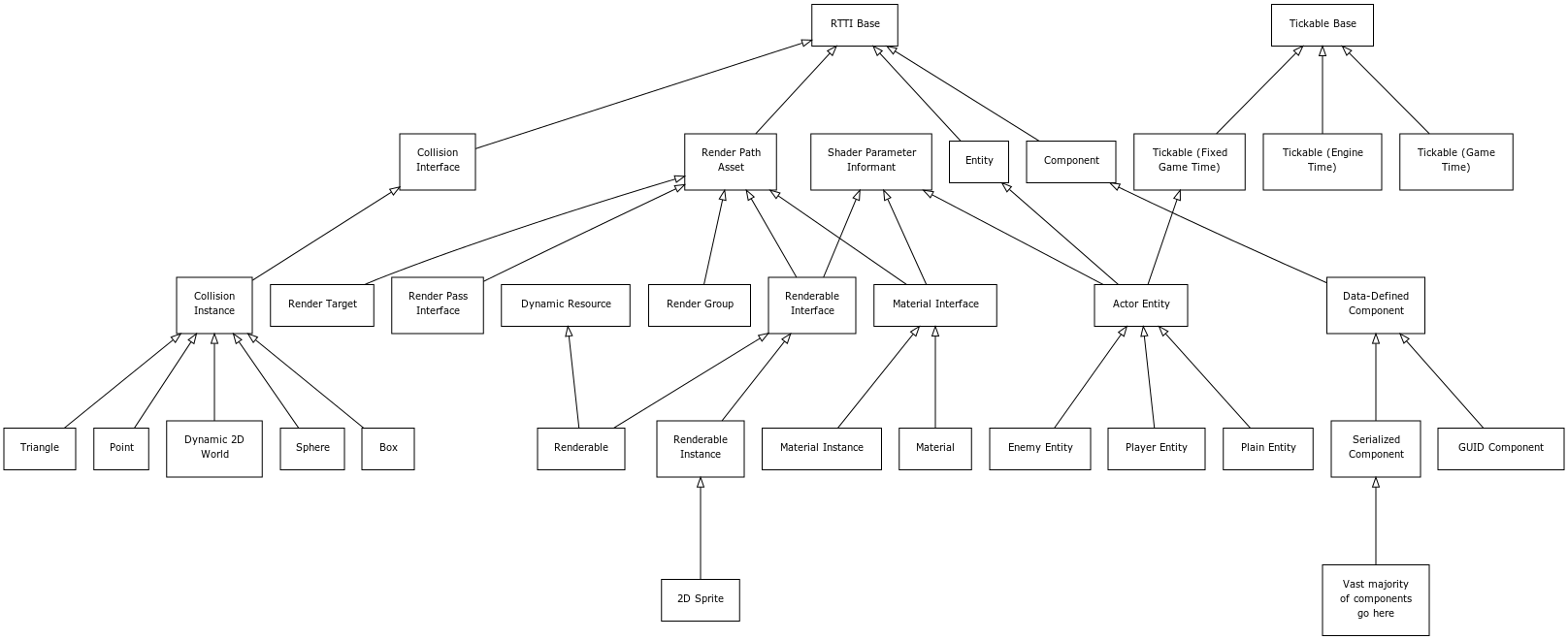

After the epic that was last week’s entity-component refactoring recap, I figured I’d do something a little shorter this week. It’s been a while (literal years, in fact) since I’ve drawn up a rough UML diagram of my engine’s most-used classes, and I was curious to see what it would look like now.

This is by no means a complete tree; many entity and component subclasses have been left off, as well as the vast majority of tickables, a handful of “render path” classes, and probably some others. It also doesn’t show important relationships among classes, such as the template/instance pattern used by both the renderable and material classes. But I feel like it provides a decent 10,000-foot view of how my engine is structured, at least in part.

The “RTTI Base” class (short for runtime type information) is the fundamental class from which anything that needs to be dynamically cast at runtime is derived. I don’t use C++’s built-in RTTI, but it is occasionally useful to have this information for certain types of objects (especially gameplay-related stuff like entities and components), so I can opt in to this functionality when it’s needed. Since I’ve discussed handles in previous posts, it’s worth noting that every RTTI object gets a handle, which can be used essentially like a smart pointer.
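To make the idea a little more concrete, here’s a minimal sketch of what opt-in RTTI plus handles could look like. This is not the engine’s actual code: `DECLARE_RTTI`, `IsA`, `DynamicCast`, `HandleTable`, and the example `Entity`/`Component`/`Player` classes are all names made up for illustration, and the handle table is deliberately simplified (slots are never reused).

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical opt-in RTTI base: each class owns a unique static tag and
// answers ancestry queries through a virtual IsA().
class RttiBase {
public:
    virtual ~RttiBase() = default;
    static const void* StaticType() {
        static char tag;
        return &tag;
    }
    virtual bool IsA(const void* type) const { return type == StaticType(); }
};

// Boilerplate a derived class adds to opt in (assumed macro, not a real API).
#define DECLARE_RTTI(Parent)                                        \
public:                                                             \
    static const void* StaticType() {                               \
        static char tag;                                            \
        return &tag;                                                \
    }                                                               \
    bool IsA(const void* type) const override {                     \
        return type == StaticType() || Parent::IsA(type);           \
    }

class Entity : public RttiBase { DECLARE_RTTI(RttiBase) };
class Component : public RttiBase { DECLARE_RTTI(RttiBase) };
class Player : public Entity { DECLARE_RTTI(Entity) };

// Returns the object as a T* if it is a T (or derives from T), else nullptr.
template <typename T>
T* DynamicCast(RttiBase* object) {
    return (object && object->IsA(T::StaticType())) ? static_cast<T*>(object)
                                                    : nullptr;
}

// Hypothetical handle: a slot index plus a generation counter, so a stale
// handle resolves to nullptr instead of a dangling pointer.
struct Handle {
    std::uint32_t index;
    std::uint32_t generation;
};

class HandleTable {
public:
    Handle Register(RttiBase* object) {
        slots_.push_back(Slot{object, 1});
        return Handle{static_cast<std::uint32_t>(slots_.size() - 1), 1};
    }
    void Release(Handle handle) {
        assert(handle.index < slots_.size());
        Slot& slot = slots_[handle.index];
        if (slot.generation == handle.generation) {
            slot.object = nullptr;
            ++slot.generation;  // invalidates every outstanding copy
        }
    }
    RttiBase* Resolve(Handle handle) const {
        if (handle.index >= slots_.size()) return nullptr;
        const Slot& slot = slots_[handle.index];
        return slot.generation == handle.generation ? slot.object : nullptr;
    }

private:
    struct Slot {
        RttiBase* object;
        std::uint32_t generation;
    };
    std::vector<Slot> slots_;
};
```

With something like this, gameplay code can hold a `Handle` to an entity, call `Resolve` each frame, and `DynamicCast` the result to the type it expects, getting a null pointer back if the object has gone away or is the wrong type.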

“Shader Parameter Informants” are classes which are capable of setting values for shader parameters (or “uniforms”) at render time. Whether they actually get a chance to set values depends on a number of factors beyond the scope of this post, although that would certainly be a fun topic to cover in its own post in the future. In short, a shader can provide some information in metadata specifying how it expects each of its parameters to be filled out, and the code attempts to reduce the frequency of shader parameter updates by sorting like elements together, so they may be rendered with successive draw calls without changing parameters in between.
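As a rough illustration of the sorting idea (and only that; the metadata side is left out entirely), here’s a small sketch. `DrawItem`, `parameterKey`, `ShaderParameterInformant::SetParameters`, and `RenderSorted` are hypothetical names, and the “informant” here just counts how often it gets asked to set parameters.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <tuple>
#include <vector>

// Hypothetical draw item. "parameterKey" stands in for whatever identifies a
// distinct set of shader parameter values (a material instance, say).
struct DrawItem {
    int shaderId;
    int parameterKey;
};

// Hypothetical informant interface: something able to set shader parameters.
class ShaderParameterInformant {
public:
    virtual ~ShaderParameterInformant() = default;
    virtual void SetParameters(const DrawItem& item) = 0;
};

// Test double that only records how many parameter updates were requested.
class CountingInformant : public ShaderParameterInformant {
public:
    void SetParameters(const DrawItem&) override { ++updates; }
    int updates = 0;
};

// Sorts like items together, then asks the informant to set parameters only
// when the shader or parameter key differs from the previous item.
// Returns the number of draw calls that would be issued.
std::size_t RenderSorted(std::vector<DrawItem>& items,
                         ShaderParameterInformant* informant) {
    assert(informant != nullptr);
    std::stable_sort(items.begin(), items.end(),
                     [](const DrawItem& a, const DrawItem& b) {
                         return std::tie(a.shaderId, a.parameterKey) <
                                std::tie(b.shaderId, b.parameterKey);
                     });

    std::size_t drawCalls = 0;
    const DrawItem* previous = nullptr;
    for (const DrawItem& item : items) {
        const bool changed = !previous ||
                             previous->shaderId != item.shaderId ||
                             previous->parameterKey != item.parameterKey;
        if (changed) informant->SetParameters(item);
        ++drawCalls;  // a real renderer would issue the draw here
        previous = &item;
    }
    return drawCalls;
}
```

Five items that share three distinct shader/key combinations would still cost five draw calls, but only three parameter updates once they’re sorted.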

A recent addition to this graph is the “render path asset” node and the “render pass interface” derived from it. This is related to a feature that I’d been dreaming up for a while, prototyped on Gunmetal Arcadia Zero, and have continued refining for Gunmetal Arcadia. Prior to these games and the introduction of this system, I’d always defined my render path in code. A typical path might look something like this:

- Clear the depth buffer and/or the color buffer

- Render game objects

- Apply game-specific fullscreen postprocess effects

- Render the HUD

- Render the menu and console if visible

- Apply any remaining fullscreen postprocess effects

In code, I would have to instantiate render targets for each step (where appropriate), match up postprocess shaders to sample from and draw to the correct targets, and so on. This tended to be wordy code, and worse, it tended to be error prone, especially once I started having to deal with toggling various effects off and on at runtime, causing the render path to change. (The CRT sim is a prime example of this.)
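To give a sense of the bookkeeping involved, here’s a minimal sketch of resolving which render target each pass samples from and draws to, and how that changes when an effect is toggled off. It’s an illustration under my own assumptions, not the engine’s real code: `RenderPass`, `ResolvedPass`, `ResolvePath`, and the target numbering are all invented for the example, and clears are omitted.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

constexpr int kNoTarget = -1;   // pass does not sample a previous target
constexpr int kBackbuffer = 0;  // the screen; offscreen targets are 1 and 2

// Hypothetical description of one step in the render path.
struct RenderPass {
    std::string name;
    bool isPostprocess;  // samples the previous output as a texture
    bool enabled;
};

struct ResolvedPass {
    std::string name;
    int source;       // target sampled, or kNoTarget
    int destination;  // target drawn to
};

// Assigns targets to every enabled pass. Scene-style passes draw into the
// current target. Each postprocess pass samples the current target and draws
// into the other offscreen target (ping-pong), except the last enabled
// postprocess pass, which draws to the backbuffer. Anything after that draws
// straight to the backbuffer as well.
std::vector<ResolvedPass> ResolvePath(const std::vector<RenderPass>& passes) {
    int lastPost = -1;
    for (std::size_t i = 0; i < passes.size(); ++i) {
        if (passes[i].enabled && passes[i].isPostprocess) {
            lastPost = static_cast<int>(i);
        }
    }

    std::vector<ResolvedPass> resolved;
    int current = (lastPost >= 0) ? 1 : kBackbuffer;
    for (std::size_t i = 0; i < passes.size(); ++i) {
        const RenderPass& pass = passes[i];
        if (!pass.enabled) continue;
        if (!pass.isPostprocess) {
            resolved.push_back(ResolvedPass{pass.name, kNoTarget, current});
            continue;
        }
        const int destination = (static_cast<int>(i) == lastPost)
                                    ? kBackbuffer
                                    : (current == 1 ? 2 : 1);
        assert(destination != current);  // never sample the target being drawn
        resolved.push_back(ResolvedPass{pass.name, current, destination});
        current = destination;
    }
    return resolved;
}
```

Even in this toy version, disabling the final postprocess pass changes the destination of every pass from the previous postprocess step onward, which is exactly the kind of ripple that’s easy to get wrong when wiring targets up by hand.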

Now, I still have the option to define and modify these elements in code if I absolutely need to, but the vast majority of the render path setup and maintenance can be automated through the use of some relatively simple XML markup.

Even saving the shader parameter stuff for another day, there are many more branches of this hierarchy I could elaborate on, such as the relationship between the collision interface and the transform component (not pictured), which is responsible for informing the collision system of the location of a particular collision primitive. But any one of those would be another long ramble, and I wanted to keep this blog post as high-level as possible, so I’ll wrap it up here.