So, this week’s blog and video are gonna be a little weird. I spent most of the week doing console port work, and the nature of that sort of work is that it’s confidential, which necessarily puts it at odds with my usual transparency and makes it difficult to blog about.

However, since the work closely mirrors the efforts I’ve made in the past to port my engine to Mac and Linux, I should be able to write in general terms about my porting process without divulging any of the specifics of what I’m doing. Bear in mind too that this is investigative work, and I’m not announcing or promising ports of any game to any platform at this time.

My first step when approaching any new platform is to get a minimal test program running on it, a “Hello World” sort of thing that proves I can compile and execute code on the device. Most of the sample code I have access to was a little on the bloated side compared to what I was looking for. Imagine an application that renders a 3D, spinning “Hello World” with bloom and antialiasing when all you want to do is call printf("Hello World"). That’s sort of the scenario I was in. Those samples did serve the purpose of verifying that the tool chain was working correctly, but they would have been impractical as a base on which to do my own development. Eventually I managed to pare down a small sample to what I felt was a more representative minimum, and from there, I began introducing my own engine code.

This is where things get a little trickier. I’m suddenly going from a few lines of “Hello World” code to tens of thousands of lines of code written over nearly a decade. It’s not going to just work. But having gone through the Mac and Linux ports already, I had a better understanding of where to start and what to expect.

The first goal is to get the code to compile by any means necessary. Once it compiles, the second goal is to get it to run without crashing. Once it’s running, the third goal is to bring subsystems (graphics, audio, input, file handling, etc.) online one at a time until it’s playable.

So I start by making it compile. Normally this means stripping out lots of code that the compiler doesn’t like. Often this is platform-specific or API-specific code that will need to be rewritten for the new target. Depending on the scenario, it may be best to separate code into #ifdef blocks for each platform. (I’m currently working in a branch and simply commenting out or deleting code with the intent to deal with merging these changes back into the trunk at some later time if this port proves successful.) Most of the time, this code is in the subsystems mentioned above, and in removing it, I’m effectively removing the ability to draw anything, play any sounds, recognize any input, and so on — everything that makes a game interactive. These will have to be rebuilt in the future.
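As a rough illustration of the #ifdef approach mentioned above (not the engine's actual code), here is a minimal C++ sketch. The `ENGINE_PLATFORM_*` macros and the `GetPlatformName` and `InitAudio` functions are hypothetical names invented for this example; only `_WIN32`, `__APPLE__`, and `__linux__` are real compiler-defined macros. The fallback branch stands in for a new target whose subsystems have been stripped so that the build compiles.

```cpp
#include <cstdio>
#include <string>

// Map compiler-provided macros onto hypothetical engine-level ones.
// A new console target would normally get its own branch here; in this
// sketch, anything unrecognized falls through to "unported".
#if defined(_WIN32)
    #define ENGINE_PLATFORM_WINDOWS 1
#elif defined(__APPLE__)
    #define ENGINE_PLATFORM_MAC 1
#elif defined(__linux__)
    #define ENGINE_PLATFORM_LINUX 1
#else
    #define ENGINE_PLATFORM_UNPORTED 1
#endif

// Returns a human-readable name for the platform this file was built for.
std::string GetPlatformName()
{
#if defined(ENGINE_PLATFORM_WINDOWS)
    return "Windows";
#elif defined(ENGINE_PLATFORM_MAC)
    return "Mac";
#elif defined(ENGINE_PLATFORM_LINUX)
    return "Linux";
#else
    return "Unported";
#endif
}

// Hypothetical subsystem entry point. On an unported platform the real
// implementation is compiled out, so the function reports failure
// instead of preventing the build from compiling.
bool InitAudio()
{
#if defined(ENGINE_PLATFORM_UNPORTED)
    std::printf("InitAudio: not implemented on this platform yet\n");
    return false;
#else
    // The existing platform-specific audio setup would go here.
    return true;
#endif
}
```

Keeping every platform's code in one file this way avoids a long-lived branch, at the cost of noisier source. Which trade-off is better probably depends on how much the platforms end up sharing.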

There’s usually some amount of futzing with project settings before everything will compile nicely. This varies with platform, compiler, and IDE, and in my experience has been a huge wildcard. This new platform hasn’t given me too much trouble in this regard, but I’m also not entirely convinced I have all my projects set up correctly despite being able to compile and execute code. It’s something I’ll have to keep an eye on as I move forward.

So, once I have a build compiling and running, the next step is to see where it crashes and fix it. Historically, file handling issues are often among the first culprits, for instance because a path is incorrect and a content package file can’t be opened for read or a config file opened for write. Indeed, that was one of the first problems I encountered this time as well, and although I’m not certain my solution is shippable (in fact, I’m nearly certain it’s not), I did manage to get some initial file reads and writes working as expected.
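When a crash traces back to a bad path, it helps to have the failing path and the reason logged at the point of the open call. The following is a minimal sketch of that idea using only the C standard library. `OpenLogged` and `WriteThenReadBack` are hypothetical helpers made up for this example, and a console SDK may well require its own file API in place of `fopen`.

```cpp
#include <cerrno>
#include <cstdio>
#include <cstring>
#include <string>

// Opens a file and, on failure, logs the exact path and mode that were
// attempted. errno is set by fopen on POSIX systems; other platforms may
// not guarantee a meaningful value.
std::FILE* OpenLogged(const std::string& path, const char* mode)
{
    errno = 0;
    std::FILE* file = std::fopen(path.c_str(), mode);
    if (file == nullptr)
    {
        std::fprintf(stderr, "Failed to open '%s' (mode '%s'): %s\n",
                     path.c_str(), mode, std::strerror(errno));
    }
    return file;
}

// Smoke test for a new platform: write a small file, read it back, and
// confirm the contents match. Returns false if any step fails.
bool WriteThenReadBack(const std::string& path, const std::string& text)
{
    std::FILE* out = OpenLogged(path, "wb");
    if (out == nullptr)
    {
        return false;
    }
    const bool wrote =
        std::fwrite(text.data(), 1, text.size(), out) == text.size();
    std::fclose(out);
    if (!wrote)
    {
        return false;
    }

    std::FILE* in = OpenLogged(path, "rb");
    if (in == nullptr)
    {
        return false;
    }
    std::string readBack(text.size(), '\0');
    const bool read =
        std::fread(&readBack[0], 1, readBack.size(), in) == readBack.size();
    std::fclose(in);
    return read && readBack == text;
}
```

A round trip like `WriteThenReadBack("config_test.tmp", "volume=11")` is a cheap way to confirm that both the read path and the write path resolve to somewhere usable before the rest of the engine depends on them.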

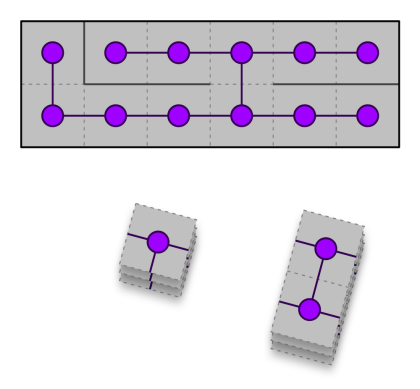

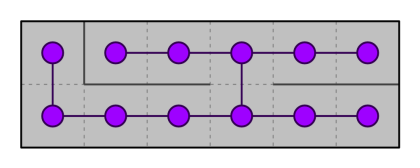

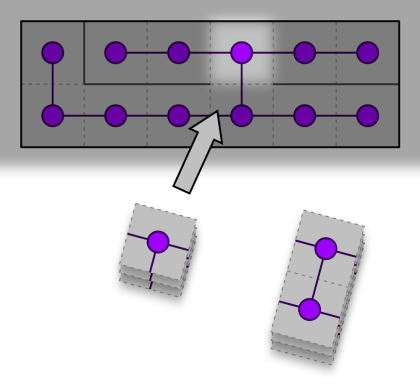

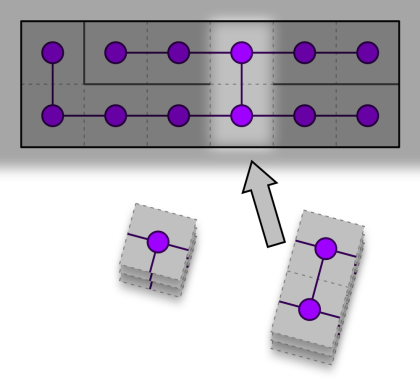

The next initialization crash happened while trying to construct renderable assets. Since I stripped out all my Direct3D 9 and OpenGL code, my renderer is currently returning NULL pointers in response to asset creation requests, and dereferencing these is causing a crash. So the next step, and the one I’m currently working on, is to stub out a good-enough renderer implementation to at least get a majority of cases handled. Having written side-by-side implementations of D3D9 and GL, I’m in a pretty good place to know what to expect, but nevertheless, this new platform’s API is one I haven’t used before, and there will be a bit of a learning curve there as I wrap my head around how this API wants to work and reconcile that with how my code has historically wanted to work.
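One common way to stub out a renderer is to return do-nothing placeholder objects instead of NULL, so that calling code can dereference them safely while the real backend is still being written. The sketch below assumes a renderer interface shaped roughly like the one described. Every class and method name in it (`ITexture`, `IRenderer`, `StubTexture`, `StubRenderer`, `CreateTexture`) is hypothetical and not taken from the actual engine.

```cpp
#include <cstddef>
#include <memory>
#include <vector>

// Hypothetical asset interface that game code talks to.
class ITexture
{
public:
    virtual ~ITexture() = default;
    virtual int GetWidth() const = 0;
    virtual int GetHeight() const = 0;
};

// Hypothetical renderer interface; D3D9 and GL backends would each
// provide their own implementation.
class IRenderer
{
public:
    virtual ~IRenderer() = default;
    virtual ITexture* CreateTexture(int width, int height) = 0;
};

// Placeholder texture: remembers its dimensions but owns no GPU resource.
class StubTexture : public ITexture
{
public:
    StubTexture(int width, int height) : m_Width(width), m_Height(height) {}
    int GetWidth() const override { return m_Width; }
    int GetHeight() const override { return m_Height; }

private:
    int m_Width;
    int m_Height;
};

// Stub backend: every creation request succeeds with a valid, inert
// object, so callers never receive a null pointer.
class StubRenderer : public IRenderer
{
public:
    ITexture* CreateTexture(int width, int height) override
    {
        m_Textures.push_back(
            std::unique_ptr<ITexture>(new StubTexture(width, height)));
        return m_Textures.back().get();
    }

    std::size_t GetTextureCount() const { return m_Textures.size(); }

private:
    // The renderer owns the stubs so they stay alive while it does.
    std::vector<std::unique_ptr<ITexture>> m_Textures;
};
```

With something like this in place, initialization can get past asset creation, and each stub can later be swapped for a real implementation one resource type at a time.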

So that’s where I am now, knee deep in renderer refactoring, with audio, input, and more looming on the horizon. All things considered, this has been a fairly smooth experience so far, compared to both my previous porting experiences to Mac and Linux and to my previous console development experiences in AAA. There’s been a surprising number of things just working when I expected to encounter roadblocks. Hopefully that trend will continue as I venture further into this port.