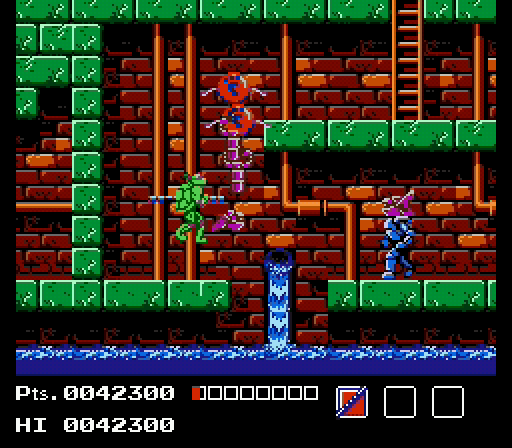

Even with two shipped games under its belt, my entity-component system (ECS) is still one of the youngest features of my engine, and it should come as no surprise that I’ve discussed changes to it many times throughout the development of the Gunmetal Arcadia titles.

On the schedule today: ENGINE REFACTORING!! :D

Rewriting entity creation to support multiple definition markup files as input.

— J. Kyle Pittman (@PirateHearts) July 7, 2016

A few weeks back, just before I resumed my normal weekly blogging schedule, I tweeted about some ECS refactoring I was doing to better support dynamically modifying enemies and other entities at creation time. Then just last week, I made yet another change to my entity serialization, so I’ll be covering both of those today.

Entity creation from multiple definitions

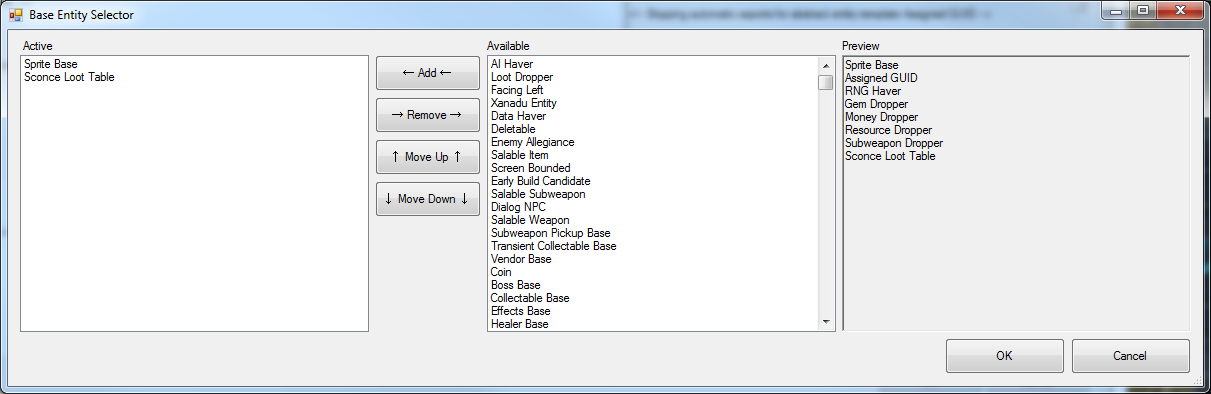

Last August, I added a feature to my editor to allow me to split entity definition markup across multiple hierarchical templates, giving birth to a paradigm that I compared, perhaps inadvisably, to multiple inheritance.

This solved a problem of having a large amount of copied and pasted markup across entities that were similar in some ways but which couldn’t be easily generalized to a single common ancestor.

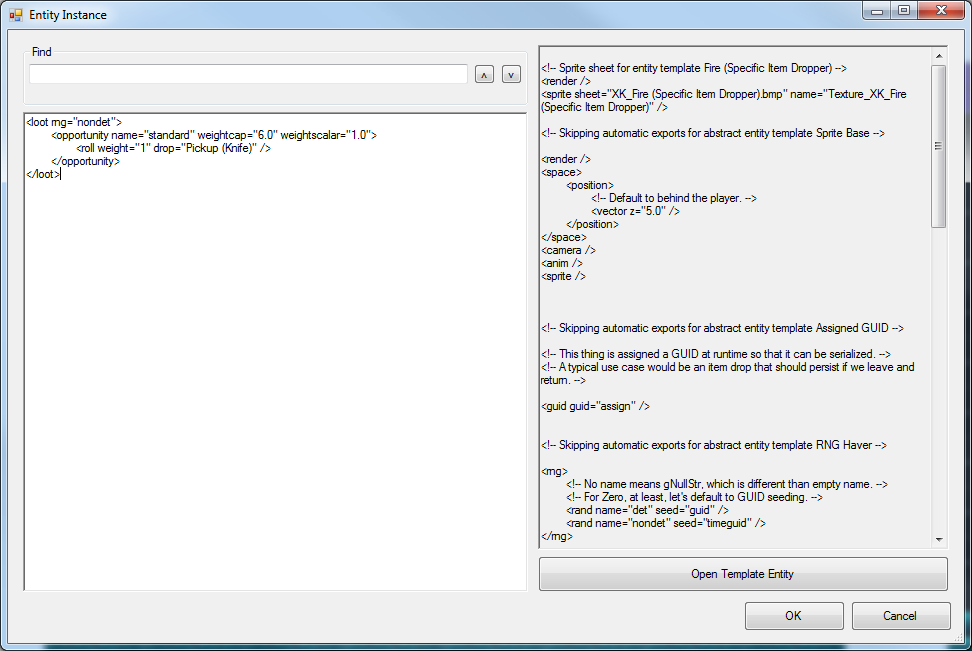

This proved to be a huge help in shipping Gunmetal Arcadia Zero, but I also relied on the ability to add markup to specific instances of entities which had been placed in levels. I often used this to flag certain enemies as being counted towards challenge room locking and unlocking, or to spawn rewards when bosses died, or other situation-dependent events. It would not have been appropriate to create a new entity template for each of these since each one would only be instantiated once; keeping that markup associated with the instance that needed it was and is the most logical option.

Unfortunately, the procedurally generated nature of Gunmetal Arcadia precludes the use of instance-specific markup. With a small number of exceptions, entities are not placed in the editor at all, but are instead spawned at runtime at known-safe locations given information about spawn “opportunities.” As a result, I was forced to find an alternative that would play nice with procedural generation.

My solution was to extend the concept of aggregating multiple data definition sources from the editor to the game. Historically, every entity has been spawned from a single definition file containing all the markup it would ever need. The notions of an entity hierarchy and instance-specific markup were strictly editor conceits; all this data would eventually get concatenated together into a single file for each and every entity.

As of a recent change, I can now specify an arbitrarily large list of data definition files from which to build an entity, rather than just one. This is conceptually similar to concatenating markup into a single file in the editor, but rather than iterating through markup that was assembled offline, I spin through all the markup in one file, then proceed to the next, and continue until all files have been exhausted. The end result is identical, provided the source is otherwise the same aside from being split across multiple files.
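As a rough sketch of that build loop (Python purely for illustration): the `build_entity` name, the dict-based entity, and the XML shape where each child of the root describes one component are all assumptions made for this example, not the engine's actual API. The rule that later markup for the same component augments earlier markup is likewise an assumption.

```python
import xml.etree.ElementTree as ET

def build_entity(definitions):
    """Build an entity (component name -> attribute dict) from an ordered
    list of XML definition strings, processed one source after another."""
    entity = {}
    for markup in definitions:
        root = ET.fromstring(markup)
        for node in root:
            # Assumed merge rule: later markup for a component augments
            # whatever earlier sources already specified for it.
            entity.setdefault(node.tag, {}).update(node.attrib)
    return entity

# One definition split across two sources (hypothetical markup)...
split = build_entity([
    '<entity><sprite sheet="bat" /></entity>',
    '<entity><health max="3" /></entity>',
])
# ...and the same markup concatenated into a single source.
merged = build_entity([
    '<entity><sprite sheet="bat" /><health max="3" /></entity>',
])
```

Under these assumptions, `split` and `merged` describe the same entity, which is the property the paragraph above relies on.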

In this way, I’ve been able to solve the problem of marking up entities based on situation, with my first use case being to count the number of living enemies for locking and unlocking challenge rooms, as I mentioned briefly in Episode 40. I took the same instance-specific markup that I used in Gunmetal Arcadia Zero for flagging enemies as counted for challenge rooms, and I moved it to a new “mixin” entity template. Then I simply need to augment the list of entity definitions with this one when spawning enemies within challenge rooms, and they automatically get counted without the need to create challenge-room-specific variations of every single enemy type.
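In sketch form, the spawn-time decision amounts to choosing which definitions go into the list. The file names and the `definitions_for_enemy` helper below are hypothetical, and Python is used only for illustration:

```python
CHALLENGE_COUNTED_MIXIN = "mixin_challenge_counted.xml"  # hypothetical file name

def definitions_for_enemy(enemy_template, in_challenge_room):
    """Return the ordered list of definition files to build this enemy from."""
    definitions = [enemy_template]
    if in_challenge_room:
        # Augment the list; the enemy template itself is left untouched,
        # so no challenge-room-specific variant of the enemy is needed.
        definitions.append(CHALLENGE_COUNTED_MIXIN)
    return definitions

normal = definitions_for_enemy("enemy_bat.xml", in_challenge_room=False)
counted = definitions_for_enemy("enemy_bat.xml", in_challenge_room=True)
```

The same enemy template serves both cases; only the list handed to the entity factory differs.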

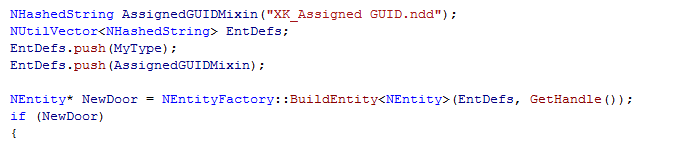

Since solving that first use case, I also found a use for this when working on spawning doors between the level hub and subrooms. Historically, doors have been assigned a GUID by virtue of being placed in the editor. Entities require a GUID to be serialized (saved to and loaded from disk, but also saved to memory to be recalled during the same session), so if we want to preserve the state of a locked or unlocked door, we need one. Now that they are spawned dynamically, they require a dynamically assigned GUID. I could have edited the primary door template in the editor and added some markup to accomplish this, on the assumption that all doors in this game will be dynamically spawned and will all require a dynamically assigned GUID.

However, in light of this new tool, it feels more correct to split the markup for dynamically assigning a GUID out into its own “mixin” template that can be applied at runtime. In this way, I can preserve the way doors have worked in the past in case I ever need to rely on that behavior again. I like this too because it feels truer; there’s nothing intrinsically “dynamically spawned” about the concept of a door, so putting that markup in the door template feels kludgey. Splitting it out into its own thing that can augment a door, effectively turning it into a different kind of door, makes more sense.

Additional component serialization

Continuing with the development of doors, I ran into a couple of unusual problems (not pictured below) in which entering the same door multiple times could, depending on the circumstance:

- Drop the player in front of a different door in the same room

- Drop the player in the middle of the screen rather than a door

- Crash the game

At least one of these turned out to be a fairly boring bug caused by failure to correctly account for the position of the door within a large scrolling room. But the more interesting issue, and the one I’m going to talk about here, dealt with some assumptions I’ve made in the past regarding data definition markup and serialized entity-component state.

In the past, I’ve typically assumed that the data definition markup I provide when constructing an entity represents its whole. Regardless of whether that markup is contained within a single file or split across multiple, it has always represented the complete, fully constructed state of the entity and all its components. Recently, I broke that assumption.

The situation was this: when placing usable doors on either side of a hub/subroom transition, each needs to know about the other in order to specify a destination. In the past, this has been handled by the editor. I mark one door as “Side A,” the other as “Side B,” and the editor automatically adds some markup to each letting it know the other exists and identifying itself to the other. But once again, I can’t rely on editor tools, as these are now spawned dynamically at runtime.

Now, I might not have the actual doors available to me in the editor, but what I do have, as I’ve mentioned before, are entities representing known-safe spawn points. I have a set of these for enemies, and I have another set for doors. And my solution to this particular problem was to add a new component to the door spawn points called a “door proxy.” This component is responsible for making the link between the two doors on either side of the transition; by virtue of the fact that it doesn’t get constructed until the level has been procedurally generated, it can trust that that information will be available. In fact, it is this proxy component that is responsible for spawning the door itself, which may be an invisible bounding box that prompts for the “up” input, or may be a visible locked door requiring a key to open.

So the proxy spawns the actual door, and it also maintains the information on how the two sides of the door are connected. But there’s a missing piece, and that’s how the door knows that it needs to utilize this information. I’ve accomplished that with the use of yet another new component, a “teleport helper.” This component exists on the door itself and looks for incoming information from a door proxy. If found, it does essentially exactly what the editor tool did in Zero: it assigns a unique name to this door, and it sets up information for teleporting to the other door, which is assumed to have been assigned a name in the same fashion and can therefore be located when necessary.
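A simplified sketch of how the two pieces fit together follows. Everything named here is hypothetical: the `Entity` and `DoorProxy` classes, the `link_id` field, and the `door_<id>_<side>` naming scheme are invented for illustration. The teleport helper is also modeled as a plain function rather than a component, to keep the example short.

```python
from dataclasses import dataclass, field

@dataclass
class Entity:
    components: dict = field(default_factory=dict)

def door_name(link_id, side):
    # Both sides derive names the same way, so each can locate the other.
    return f"door_{link_id}_{side}"

def teleport_helper(door, proxy):
    """Add name and teleport components at runtime from proxy information."""
    other_side = "B" if proxy.side == "A" else "A"
    door.components["name"] = {"value": door_name(proxy.link_id, proxy.side)}
    door.components["teleport"] = {
        "destination": door_name(proxy.link_id, other_side)
    }

@dataclass
class DoorProxy:
    """Lives on a door spawn point and knows how the two sides are linked."""
    side: str     # "A" or "B"
    link_id: int  # shared by both spawn points of one transition

    def spawn_door(self):
        door = Entity()
        teleport_helper(door, self)
        return door

door_a = DoorProxy(side="A", link_id=7).spawn_door()
door_b = DoorProxy(side="B", link_id=7).spawn_door()
```

Note that the name and teleport components appear on the door only because the helper added them; nothing in the door's own definition mentions them.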

That’s all well and good, and entering a door constructed in this manner works correctly. The trouble starts when we go back the other way. Rather than returning through the door we came from, we spawn in the middle of the room (default fallback behavior when no appropriate spawn point can be found), and if we enter it a second time, the game crashes.

The problem stems from what the teleport helper component does. It assigns a name to the entity (via a name component) and provides information about its destination (via a teleport component, which is different from a teleport helper component). That in itself is fine, except that the data definition markup for the door does not explicitly specify either of these components; they are created at runtime as they are needed.

I mentioned at the start of this latter wall of text that I’ve historically assumed the data definition markup represented the whole of an entity, and as I’ll explain, that meant specifically that no additional components were expected to be added after the entity was constructed from its definition.

My ECS code has no qualms about adding components post-factory-build. In fact, in both You Have to Win the Game and Super Win the Game, I frequently added components by hand in code, as the notion of a complete data definition for the player entity in particular simply didn’t exist at the time. So that wasn’t a problem. And my serialization code didn’t care if new components had been added when it went to save the entity to disk; it would spin through whatever components were present and write out all their data.

The problem arose when loading this serialized data back up, as in the case of returning to the first room. After spawning the door, the spawn point with the proxy component deletes itself, as it assumes the door is capable of sustaining itself after creation (and with the assigned GUID discussed in the first half of this post, it should be). But the way serialization works in my engine is, the entity first gets rebuilt from its data definition file(s), and then the serialized data (which is assumed to be a delta from this default state) gets applied a component at a time. And here’s the catch that’s taken me far too many paragraphs to reach: if a particular component is not specified in the data definition file(s), it will not be constructed; if it’s not constructed, it will not attempt to load previously serialized data.

Functional doors require a name and a teleport destination, but specify neither component in their data definition markup, relying instead on the teleport helper component to construct these. Therefore, when reloading a door that was previously serialized, it will be missing these components, and the serial data for them — which does exist — is simply ignored.

My solution was simple enough: when rebuilding an entity from serial data, I now spin through that data, constructing any missing components for which serial data exists. In this manner, the name component and teleport component for the door will be constructed when their previously saved data is found, despite not being explicitly specified in the data definition markup for a door.
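The difference between the old and new load paths can be sketched as follows. This is an illustration in Python under assumed data shapes (entities and serial data as nested dicts). The `rebuild_entity` function and its `construct_missing` flag are hypothetical, not the engine's real interface.

```python
def rebuild_entity(definition_components, serialized, construct_missing=True):
    """Rebuild an entity from its definition, then apply serialized deltas."""
    # Step 1: construct only the components named in the definition(s),
    # each in its default state.
    entity = {name: dict(defaults)
              for name, defaults in definition_components.items()}
    # Step 2: apply the serialized data one component at a time.
    for name, data in serialized.items():
        if name not in entity:
            if not construct_missing:
                continue  # old behavior: this serial data is silently ignored
            entity[name] = {}  # new behavior: construct the missing component
        entity[name].update(data)
    return entity

# Hypothetical door definition: no name or teleport component specified.
door_definition = {"collision": {"width": 16}, "teleport_helper": {}}
saved = {
    "name": {"value": "door_7_A"},
    "teleport": {"destination": "door_7_B"},
}

old = rebuild_entity(door_definition, saved, construct_missing=False)
new = rebuild_entity(door_definition, saved)
```

With `construct_missing=False` the reloaded door has no name or teleport component even though their data was saved; with the default, both are restored.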

In truth, there was a much simpler fix for this issue, which would have been to stub out placeholder data definition markup for these two components to ensure their creation. This would have been all of two XML tags:

<name /> <teleport />

With that, the entity factory would know to construct those components. It wouldn’t set them up with any defaults, leaving that to the teleport helper, but it would guarantee they existed prior to loading serial data.

So why didn’t I do that? Mostly it came down to the fact that my code has always allowed for components to be added after factory construction, and even though I almost never do that, and a workaround did exist in this case, it felt better to continue to support that path.

This does raise an interesting question though. If I support adding new components at runtime, should I also support removing them? Historically, I’ve never removed components from an entity, ever, and I can’t even imagine what sort of use case would necessitate that behavior, but there is a sort of irritating asymmetry in supporting one but not the other.

Oh well.