It’s the week of Christmas, I’m one more month into my mandatory vacation time, and it’s looking like it’ll still be a little while longer before I’m back to full speed. But there is finally a light at the end of the tunnel. I’ll get to that in a bit, but first, let’s take a look at what I’ve been up to since my last update…

Inspired by this NESDev forum post on forests in NES games, I started pixeling a tileset for the forest levels in Gunmetal Arcadia. I’ve known for a while there would be forest levels in these games — it’s where you’ll meet one of the main NPCs in Zero who will later become a playable character in Gunmetal Arcadia — and it was a personal goal of mine to ensure they looked better than the ones in Super Win. I’m happy to have finally pulled that off. I still have a bit of work left to do on the leaves, as they still look a little too flat and repetitive, but I’m happy with where it’s going.

I accidentally fell down a rabbit hole of renderer refactoring last week. It started when I was testing Gunmetal Arcadia Zero in the “WinNFML” configuration, a Windows build which uses OpenGL and SDL in place of DirectX. That configuration serves as a quick check to make sure the Mac and Linux builds aren’t going to be very obviously broken before I take the time to switch to those platforms, update, and rebuild.

So I run the game, and…something is very obviously wrong. Pixels in the game scene seem to be bleeding into adjacent pixels in ways they shouldn’t. I poke around in the options a bit and realize the “Pixel Sharpness” setting isn’t doing what it’s supposed to. Doesn’t take me long to realize it’s doing linear interpolation instead of nearest neighbor / point sampling. But why? I go look at the shader source, and…well, there you go. I have two samplers, both referencing the same texture; one does linear interpolation, the other nearest neighbor. And I immediately understand the problem.

OpenGL and GLSL don’t make the distinction between textures and samplers the way DirectX effects do. In GL-land, there are only samplers, each corresponding to a texture unit, and they can be updated in native code to point to whatever image data is desired. DirectX effects extend this concept by automatically associating a sampler and its state (interpolation and indexing methods) with a texture, which represents the image data. Any number of samplers may point to the same texture and may sample it in different ways. This allows the C++ code to care only about the texture and the HLSL code to care only about the sampler. The link between them is defined once upfront and never has to change.
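To make the distinction concrete, here is a minimal C++ sketch of the kind of metadata a wrapper could keep so that several samplers can share one texture. It is an illustration under assumptions, not the engine's actual code: `EffectBindings`, `SamplerBinding`, and the sampler and texture names are all hypothetical, and the real GL calls are left as a comment.

```cpp
#include <cassert>
#include <string>
#include <vector>

enum class Filter { Point, Linear };

// One entry per sampler declared in the shader (hypothetical metadata layout).
struct SamplerBinding {
    std::string samplerName;  // name the shader code uses
    std::string textureName;  // texture the sampler reads from
    Filter filter;            // sampling state belongs to the sampler, not the texture
    int textureUnit;          // unit reserved for this sampler
    unsigned boundImage;      // image handle currently assigned to that unit
};

class EffectBindings {
public:
    // Every sampler gets its own unit, even when it shares a texture with another sampler.
    void AddSampler(const std::string& sampler, const std::string& texture, Filter filter) {
        assert(Find(sampler) == nullptr && "sampler names must be unique");
        const int unit = static_cast<int>(bindings_.size());
        bindings_.push_back(SamplerBinding{sampler, texture, filter, unit, 0u});
    }

    // Game code only knows the texture name. Every sampler that references
    // that texture is updated; the return value is how many were touched.
    int SetTexture(const std::string& texture, unsigned image) {
        int updated = 0;
        for (SamplerBinding& b : bindings_) {
            if (b.textureName == texture) {
                // A real backend would bind `image` to b.textureUnit here
                // and apply b.filter to that unit.
                b.boundImage = image;
                ++updated;
            }
        }
        return updated;
    }

    // Returns nullptr when no sampler has the given name.
    const SamplerBinding* Find(const std::string& sampler) const {
        for (const SamplerBinding& b : bindings_) {
            if (b.samplerName == sampler) return &b;
        }
        return nullptr;
    }

private:
    std::vector<SamplerBinding> bindings_;
};
```

With two samplers registered against the same texture, one linear and one point, a single `SetTexture` call updates both units while each keeps its own filter.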

DirectX effects provide many of these sorts of helpful features, and I’ve had to recreate them in my OpenGL implementation. It turns out that when I initially wrote my HLSL-to-GLSL converter two years ago, I made the assumption that textures and samplers would always be one-to-one, as that was how I had historically always used them. It wasn’t until just recently that I’d had cause to decouple them, and my GL implementation broke as a result.

Once I understood the nature of the problem, the fix was fairly straightforward. Thankfully, as it took place entirely in my own wrapper code designed to make OpenGL and GLSL behave more like Direct3D, it didn’t involve any actual painful GL coding, just some rewiring of metadata and refactoring of code that I had written myself and knew completely.

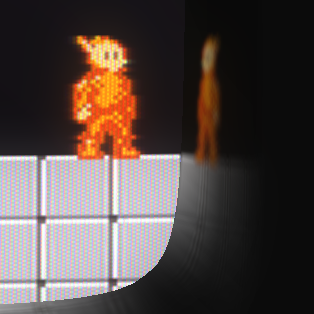

It’s one of those minor things that I forced myself to ignore for a long time, but the reflections on the CRT screen in Super Win the Game just never sat well with me. They were too skewed out in odd directions, too far from what I see when I look at my reference CRT screen. So I finally made an attempt to fix that in Gunmetal Arcadia. There’s still a little bit of skewing due to the FOV of the perspective camera, but I’ve significantly flattened these out so they should appear more natural and correct.

Having just had my hands in rendering code, I thought it would be a good time to attempt the longstanding task of breaking down my primary render loop into a number of smaller, more digestible functions.

Going back years and years (over seven years now, which seems like an impossibly long time), I designed my renderer to automatically look for places where it could minimize how often shaders and render states needed to change. This was done by defining my entire render path as multiple “passes” (admittedly a poor choice of naming considering its use in shader languages), within which a number of “groups” could be selected for drawing. A pass might be, for instance, “draw all in-world objects,” and its corresponding groups would be “in-world objects with/without alpha.” I define these upfront when the game launches, and each frame, I spin through them, in order, and render each item in each group. Within each group, ordering is not guaranteed, as the group gets an opportunity to sort itself for optimal rendering by arranging items that share a shader next to each other so that the renderer doesn’t have to naively set the shader each time. I take this a step further by minimizing how frequently shader parameters change as well, given some metadata specifying whether the parameter needs to change on a per-object or per-material basis. Things like world-view-projection matrices typically must be set for each individual object, while things like textures can be set once for the material and reused for each object with that material.
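The sorting idea can be sketched in a few lines of C++. This is a simplified illustration, not the renderer's real code: `RenderItem`, `SortGroup`, and `DrawGroup` are hypothetical names, shaders and materials are reduced to integer IDs, and the "draw" only counts how often each kind of state would be set.

```cpp
#include <algorithm>
#include <cassert>
#include <tuple>
#include <vector>

struct RenderItem {
    int shaderId;
    int materialId;
    int objectId;
};

struct StateChanges {
    int shaderSets = 0;         // how many times the shader was set
    int materialParamSets = 0;  // per-material parameters (e.g. textures)
    int objectParamSets = 0;    // per-object parameters (e.g. world-view-projection)
};

// Arrange items so that those sharing a shader, then a material, sit next to each other.
void SortGroup(std::vector<RenderItem>& group) {
    std::stable_sort(group.begin(), group.end(),
        [](const RenderItem& a, const RenderItem& b) {
            return std::tie(a.shaderId, a.materialId) < std::tie(b.shaderId, b.materialId);
        });
}

// Walk the group in order, only "setting" state when it differs from the previous item.
StateChanges DrawGroup(const std::vector<RenderItem>& group) {
    StateChanges changes;
    int currentShader = -1;
    int currentMaterial = -1;
    for (const RenderItem& item : group) {
        assert(item.shaderId >= 0 && item.materialId >= 0);
        if (item.shaderId != currentShader) {
            currentShader = item.shaderId;
            currentMaterial = -1;  // a new shader needs its material parameters resent
            ++changes.shaderSets;
        }
        if (item.materialId != currentMaterial) {
            currentMaterial = item.materialId;
            ++changes.materialParamSets;
        }
        ++changes.objectParamSets;  // per-object parameters are set for every item
    }
    return changes;
}
```

For four items that alternate between two shaders, drawing unsorted sets the shader four times; after `SortGroup` the same items need only two shader sets, while the per-object count stays at four.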

Anyway, long recap over, the point is that the code that iterated through these passes and groups and objects and materials was one enormous six-hundred-line function, with bits and pieces ifdef’d out for Direct3D and OpenGL builds. Bad in theory, bad in practice, just a mess any time I had to touch it. My goal was to break out the inner part of each loop into its own function for clarity and ease of maintenance. Seemed like a pretty easy task, and it was, right up until I decided not to stop there and instead also totally redesign how the render path is defined.

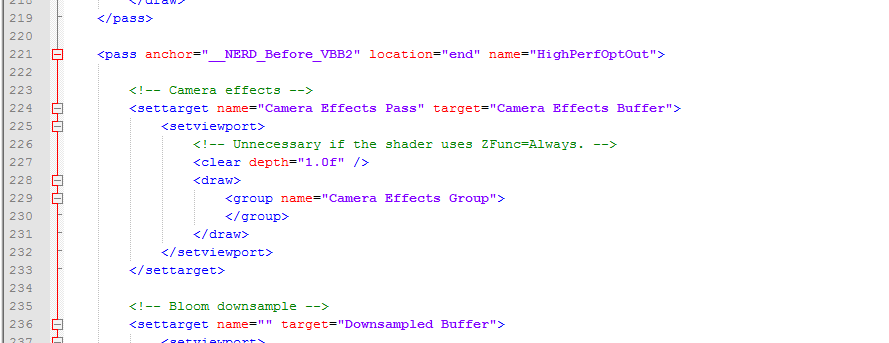

I mentioned that I define passes and groups upfront when the game launches. To be clear, I mean that for the last few years, for every game I’ve made, I’ve hardcoded these passes and groups into the native C++ code. As my rendering paths have grown in complexity, and as I’ve started to move towards defining more and more aspects of my games in data, this has become a problem. What I really want, what I’ve wanted for a while, is to be able to define some markup (XML, as is my preference) and translate this into the render path. And ultimately, that is exactly what I’ve ended up with, but it took me a while to get there.

One of the fundamental shifts in design was to move away from a strictly linear sequence of passes and groups to a nested tree-like format corresponding to a state stack maintained within the renderer. In the past, each pass would necessarily have to define which render target it wanted to draw to. Now, a pass may optionally choose to specify a render target, and any nested subpasses maintain that state unless they specify their own. The same is done for viewports. Whenever the renderer is finished iterating through the members of a pass that altered the render target or viewport, it pops that data off its stack, restores the previous state, and continues. (In practice, I also reduce redundant render target setting by only committing these changes whenever I’m about to draw something or clear the current target.)
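A rough C++ sketch of that stack-plus-lazy-commit behavior follows. Everything in it is assumed for illustration: the `Pass` and `Renderer` types, the render target names as plain strings, and a commit log standing in for real device calls. Viewports would follow the same pattern and are omitted.

```cpp
#include <cassert>
#include <string>
#include <utility>
#include <vector>

// A node in the render path tree (hypothetical layout).
struct Pass {
    std::string name;
    std::string renderTarget;    // empty means "inherit from the enclosing pass"
    int drawCount;               // draws issued directly by this pass
    std::vector<Pass> children;  // nested subpasses
};

class Renderer {
public:
    explicit Renderer(std::string backbuffer) {
        targetStack_.push_back(std::move(backbuffer));
    }

    void Run(const Pass& pass) {
        // Only passes that name a render target push onto the stack.
        const bool pushed = !pass.renderTarget.empty();
        if (pushed) targetStack_.push_back(pass.renderTarget);

        for (int i = 0; i < pass.drawCount; ++i) Draw();
        for (const Pass& child : pass.children) Run(child);

        if (pushed) {
            assert(targetStack_.size() > 1);  // the backbuffer entry is never popped
            targetStack_.pop_back();          // restore the enclosing pass's target
        }
    }

    // Targets actually committed to the (pretend) device, in order.
    const std::vector<std::string>& CommitLog() const { return commits_; }

private:
    void Draw() {
        // Commit lazily: only touch the device if the wanted target differs.
        const std::string& wanted = targetStack_.back();
        if (wanted != committed_) {
            committed_ = wanted;
            commits_.push_back(wanted);
        }
        // The actual draw call would go here.
    }

    std::vector<std::string> targetStack_;
    std::string committed_;
    std::vector<std::string> commits_;
};
```

In this sketch, a pass that sets a target but never draws costs nothing on the device, and a subpass with no target of its own keeps drawing to whatever its parent selected.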

Finally (yes, there’s more), I also killed off a number of old features that had sat dormant for years and were threatening to complicate this change. This included support for multiple render targets (used once in an old deferred rendering demo, never ported to OpenGL) as well as a few separate options for preserving the state of render targets across device resets.

Ever since Super Win, David and I have been in an escalating arms race to develop a cooler Minor Key Games intro splash animation. Here’s the new one I’ve cooked up for Gunmetal Arcadia. This draws from my interest in ’80s laser grids and also hearkens back to the art style of my 2008 indie debut Arc Aether Anomalies.

I mentioned that I’ve been moving towards defining more content in markup, and the render path refactoring is only the first of many steps I hope to take as I bring my engine up to speed with this methodology. My game initialization code is also fairly heavy with definitions for things like configurable variables, controls and default bindings, console commands, and renderable assets, including things like quads used for drawing fullscreen effects.

I took a first step towards generalizing configurable variables over the weekend by refactoring my config system to maintain the state of all variables internally. In the past, the registration process has involved associating a named config variable (e.g., “VSync”) with a pointer to a piece of data in some object somewhere (bool bVSync). These variables would typically live in the core game object, which persists throughout the entire duration of the game, so the pointers would never risk being invalidated.

With this change, I’ve removed all those member variables. These are now maintained internal to the config system and are strictly accessed through that system. This wasn’t a particularly difficult change, but it did involve touching a lot of code, as I have over one hundred config variables at this point.
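As a small illustration of the difference, here is a C++ sketch of a config system that owns its values instead of holding pointers into other objects. The class and method names are hypothetical, only bools and floats are shown, and "PixelSharpness" is just an example name borrowed from the option mentioned earlier.

```cpp
#include <cassert>
#include <map>
#include <string>

// The system stores every value itself; callers never hold a pointer to the
// underlying data, so there is nothing that can dangle.
class ConfigSystem {
public:
    void RegisterBool(const std::string& name, bool defaultValue) {
        assert(bools_.count(name) == 0 && "config variable registered twice");
        bools_[name] = defaultValue;
    }

    void RegisterFloat(const std::string& name, float defaultValue) {
        assert(floats_.count(name) == 0 && "config variable registered twice");
        floats_[name] = defaultValue;
    }

    // at() throws std::out_of_range for names that were never registered.
    bool GetBool(const std::string& name) const { return bools_.at(name); }
    float GetFloat(const std::string& name) const { return floats_.at(name); }

    void SetBool(const std::string& name, bool value) { bools_.at(name) = value; }
    void SetFloat(const std::string& name, float value) { floats_.at(name) = value; }

private:
    std::map<std::string, bool> bools_;
    std::map<std::string, float> floats_;
};
```

Code that used to read a member like `bVSync` directly would instead call something like `config.GetBool("VSync")`, which is why a change like this touches so many call sites.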

The next step will be to define these variables and their default values in markup. In order to do this, I’ll have to solve the problem of how to represent function pointers in markup (used for callbacks in response to config variables changing) and then match these up to functions at runtime. I’m not yet sure how this will work, short of a simple name-to-function-pointer mapping, as I’ve done in the past for automating the process of creating entities and components from markup. I’d like to find a better, more generalized way to do this, something more along the lines of tagging functions with a keyword or wrapping them in a macro that automatically associates them with a name, but I’m not yet exactly sure what that would look like. If I did have a system like that, it would also be useful in generalizing a number of other data-definable assets, particularly console commands.
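One common C++ pattern for the "wrap it in a macro" idea is a static registrar object that adds the function to a name-to-pointer table before `main` runs. The sketch below is only one possible shape for it, with made-up names throughout (`CONFIG_CALLBACK`, `CallbackRegistrar`, `FindCallback`, `OnVSyncChanged`), and it assumes callbacks take no arguments.

```cpp
#include <cassert>
#include <map>
#include <string>

using ConfigCallback = void (*)();

// A function-local static sidesteps static-initialization-order problems:
// the map is constructed the first time any registrar asks for it.
std::map<std::string, ConfigCallback>& CallbackRegistry() {
    static std::map<std::string, ConfigCallback> registry;
    return registry;
}

// Constructing one of these at namespace scope registers a function by name.
struct CallbackRegistrar {
    CallbackRegistrar(const char* name, ConfigCallback fn) {
        const bool inserted = CallbackRegistry().emplace(name, fn).second;
        assert(inserted && "duplicate callback name");
        (void)inserted;  // silence unused-variable warnings when asserts are compiled out
    }
};

// Declares the function, registers it under its own name, then opens its definition.
#define CONFIG_CALLBACK(fn)                               \
    void fn();                                            \
    static CallbackRegistrar s_registrar_##fn(#fn, &fn);  \
    void fn()

// Looks up a callback by the name that would appear in markup; nullptr if unknown.
ConfigCallback FindCallback(const std::string& name) {
    auto it = CallbackRegistry().find(name);
    return it == CallbackRegistry().end() ? nullptr : it->second;
}

// Example usage: a counter stands in for whatever the callback would really do.
int g_vsyncChanges = 0;

CONFIG_CALLBACK(OnVSyncChanged) {
    ++g_vsyncChanges;
}
```

Markup could then refer to the callback as the string "OnVSyncChanged" and the loader would resolve it with `FindCallback`. One caveat with this pattern: if the registrar lives in a static library and nothing else references its object file, the linker may drop it, so the registration silently never happens.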

I’ve been working on new tunes whenever I have the time, as I do. I’m pretty happy with how these two have turned out, and I’m excited that I’m starting to develop a coherent sound for the Gunmetal Arcadia ’verse. Listen to these and watch that GIF up above of Vireo walking through the forest and you’ll get a sense of the gameplay experience…minus the gameplay.

In testing my recent engine changes on Mac and Linux, I ran into an edge case in my build process that I hadn’t seen before. I always rebuild my content packages in Windows (as that’s where my tools live), commit the output to my SVN repository, and sync it on Mac and Linux.

The bug presented itself as: I launch the game on Linux, and nothing draws. The whole screen is black. I can hear sounds, so I know it’s running, but I don’t see anything. After a little bit of debugging, it appears that my auto-generated GLSL code isn’t in the correct format; it doesn’t have the recent texture/sampler decoupling features I’d added. I’m thinking maybe it’s a problem with timestamps. The tool should only rebuild content if the source file has been changed more recently than the destination, so maybe it skipped these, or maybe I simply forgot to rebuild and version content packages before testing this on Linux. So it’s back to Windows, rebuild and commit content, back to Linux, still doesn’t work. Debug a bit more, still coming up with the same thing.

Finally it occurs to me to validate the GLSL output being produced by my converter in Windows before it’s packaged up, and sure enough, it’s not right. But if a full clean and rebuild didn’t produce the correct output…

Double facepalm, instantly know what’s wrong. Yes, I was cleaning and rebuilding the GLSL shaders, but I was using an outdated version of my converter tool to do so because I had made changes to the tool on my desktop and now I was on my laptop, and my build process doesn’t automatically pull down new versions of my command line tools.

In retrospect, it’s such a simple and obvious problem that I’m amazed I hadn’t run into it before, but I guess historically I just haven’t done much content rebuilding on my laptop, so it had never become an issue.

In any case, this prompted me to refactor my build process a bit to help ensure that the most up-to-date versions of all tools are always available and are only maintained in one location. Previously, I had had various versions of a few command line tools checked in at different locations throughout my repository due to legacy usage, which was another source of occasional trouble.

Anyway, the Mac and Linux builds are working fine now, so yay.

Looking to the immediate future, Super Win the Game will be on sale again imminently, so now would be a great time to pick it up if you haven’t already!

I’m planning — fingers crossed — to return to a normal weekly blogging and video schedule the week of January 11. That’s three weeks from today. I’ll be kicking off 2016 with a top-to-bottom refresher of what these games are and my goals for each. It’ll have been fifteen months since I initially announced Gunmetal Arcadia and five since I announced Gunmetal Arcadia Zero, so I’m probably due for a bit of a recap.

Anyway, I’m gonna go watch Hans Gruber fall off Nakatomi Plaza now. Happy holidays! See y’all next year!