The hardware limitations of the NES necessitated certain aspects of its games’ visual aesthetics. Sprites and backgrounds were both composed of 8×8 tiles; in action platformers, these were nearly universally grouped into 16×16 blocks. In conjunction with the console’s limited color palette, this led to a recognizable uniformity across its library. Additionally, certain artistic conventions arose throughout the NES’s life cycle, among them the notion of placing drop shadows beneath platforms in action games to provide clarity of spatial positioning and a greater sense of depth.

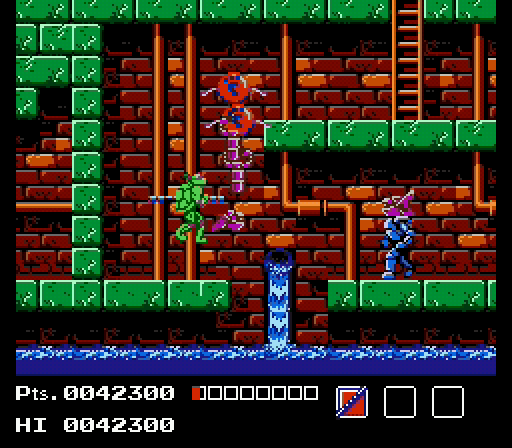

As I’ve noted before, Faxanadu was one of my primary points of reference in developing the look of Gunmetal Arcadia, and drop shadows were an important part of this. The exact nature of these shadows varies from tile set to tile set, but going back to the earliest implementation of the catacombs set, shadows have been an integral part of the look.

In Gunmetal Arcadia Zero, these shadows were all placed by hand. For the roguelike Gunmetal Arcadia, that is not an option. The quarter-scale prefabs that compose the world don’t (and shouldn’t) have knowledge of their neighbors, so drawing shadows that extend correctly across prefab boundaries cannot be done reliably without introducing severe restrictions to how prefabs can be drawn.

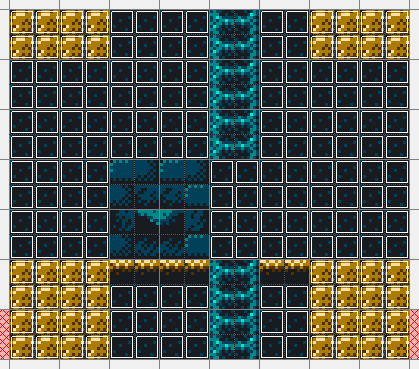

My solution has been to procedurally generate background tiles based on the collision type (solid, walkable, ladder, etc.) of adjacent tiles. After generating a level using the “card-laying” algorithm I discussed previously, I go back through each room and, on a tile-by-tile basis, see whether I need to substitute in a replacement.

In my editor, tiles that need this substitution are implemented as “custom tiles,” a catch-all feature that allows me to associate some XML metadata with a tile to give the game clues as to how it should function. Currently these only contain stubs flagging them as walkable background tiles or solid, collidable walls and floors, but in the future, these will likely be extended to include specific replacement rules.
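The metadata format isn’t shown here, so the following is only a sketch of reading such a stub. It assumes a hypothetical `<tile collision="..."/>` element (the element and attribute names are illustrative, not the editor’s actual schema) and uses Python’s standard `xml.etree.ElementTree` to pull out the walkable/solid flag.

```python
import xml.etree.ElementTree as ET

# Hypothetical schema: <tile collision="walkable"/> or <tile collision="solid"/>.
KNOWN_COLLISION_TYPES = ("walkable", "solid")

def parse_custom_tile(xml_text):
    """Return the collision flag stored in a custom tile's metadata stub."""
    root = ET.fromstring(xml_text)
    # Assumption: a missing attribute means plain walkable background.
    collision = root.get("collision", "walkable")
    if collision not in KNOWN_COLLISION_TYPES:
        raise ValueError(f"unknown collision type: {collision!r}")
    return collision
```

Keeping the flag as an attribute would leave room for replacement rules to be added later as child elements without invalidating existing stubs.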

In code, I look at the collision type of adjacent tiles in certain positions relative to the current one, and based on these, I choose substitutions. Since shadows drop down and to the right, I have to search up and to the left for occluding tiles. The exact substitution I choose depends on which tiles are occluders and how far they are from the current tile.
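The exact substitution rules aren’t listed, so here is a minimal sketch of the idea under stated assumptions: only solid tiles cast shadows, only the three immediate neighbors (left, above, and up-left) are examined, and the shadow variant names are hypothetical. The real scheme also takes the distance to occluders into account, which this sketch omits.

```python
from enum import Enum

class Collision(Enum):
    WALKABLE = 0  # background the player can pass through
    SOLID = 1     # walls and floors; these cast shadows
    LADDER = 2

# Hypothetical names for the shadowed background variants.
PLAIN = "bg_plain"
SHADOW_LEFT = "bg_shadow_left"      # solid tile directly to the left
SHADOW_TOP = "bg_shadow_top"        # solid tile directly above
SHADOW_CORNER = "bg_shadow_corner"  # solid tiles above and to the left
SHADOW_DIAGONAL = "bg_shadow_diag"  # only the up-left diagonal is solid

def is_occluder(grid, x, y):
    """Only solid tiles occlude; cells outside the level do not (assumption)."""
    if x < 0 or y < 0 or y >= len(grid) or x >= len(grid[y]):
        return False
    return grid[y][x] is Collision.SOLID

def choose_background(grid, x, y):
    """Pick a background variant by looking up and to the left for occluders."""
    if grid[y][x] is not Collision.WALKABLE:
        return None  # solid and ladder tiles are left alone here
    left = is_occluder(grid, x - 1, y)
    up = is_occluder(grid, x, y - 1)
    diagonal = is_occluder(grid, x - 1, y - 1)
    if left and up:
        return SHADOW_CORNER
    if up:
        return SHADOW_TOP
    if left:
        return SHADOW_LEFT
    if diagonal:
        return SHADOW_DIAGONAL
    return PLAIN

def substitute_room(grid):
    """Run the substitution over every tile once the level has been assembled."""
    return [[choose_background(grid, x, y) for x in range(len(row))]
            for y, row in enumerate(grid)]
```

Because the pass runs over the finished level rather than over individual prefabs, a wall in one prefab can shade the background of its neighbor without either prefab knowing about the other.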

While prototyping this scheme for background tiles, it became apparent it could be useful to apply a similar approach to solid tiles as well. I’ve typically assembled patterns like these overlapping bricks from smaller sets of general-purpose tiles, as shown below. Just like the shadows, it would be impossible to match these up perfectly at the edges of prefabs if I were to draw them by hand, so instead I choose substitutes in this overlapping brick pattern based on location and apply the appropriate edges when adjacent tiles are non-solid, so bricks don’t get cut off halfway.
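A location-based brick pattern can be sketched in the same style. This assumes each brick spans two tiles horizontally with alternate rows shifted by one tile (a running bond), uses hypothetical tile names, and only handles bricks cut off on the left or right; top and bottom edges are not covered.

```python
# Hypothetical layout: each brick spans two tiles horizontally, and odd rows are
# shifted by one tile to produce the overlapping (running bond) pattern.

def is_solid(solid, x, y):
    """Cells outside the level count as solid, so bricks run off the edge (assumption)."""
    if x < 0 or y < 0 or y >= len(solid) or x >= len(solid[y]):
        return True
    return solid[y][x]

def choose_brick(solid, x, y):
    """Pick a brick tile from position alone, capping it if its other half is missing."""
    if not solid[y][x]:
        return None
    offset = y % 2                        # shift odd rows by half a brick
    is_left_half = (x + offset) % 2 == 0
    partner_x = x + 1 if is_left_half else x - 1
    name = "brick_left" if is_left_half else "brick_right"
    if not is_solid(solid, partner_x, y):
        name += "_capped"                 # close the brick instead of cutting it in half
    return name
```

Since the half a tile belongs to depends only on its coordinates, two prefabs drawn independently still produce a seamless pattern where they meet.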

These prototypes are strictly tied to this particular tile set, but understanding how to solve these problems for one set will put me in a good place to generalize these sorts of rules to any set. In particular, I’m thinking about the tiles for the third and fifth levels of Gunmetal Arcadia Zero and how they have a top layer of grass. These rules will undoubtedly be similar to the ones I’m using for detecting edges of bricks in the catacombs set, but I expect there will be some additional edge cases to consider based on my experiences with hand-painting these tiles.

I talked a little bit in Tuesday’s video about some input remapping changes I’ve been making recently in order to better support a wider range of gamepads. This feature grew out of a two-hour development stream I did last week and consumed the majority of my week, but it also clued me in to some existing problems in my input system that I otherwise wouldn’t have found.

This solves a problem that I’ve known about for years but had never attempted to address before: there are no consistent standards or conventions for the assignment of buttons and axes on DirectInput devices. I’ve historically followed Logitech’s conventions, as their gamepads seem to be among the most popular non-XInput devices, but not all devices adhere to these same rules. In particular, I’ve had trouble with NES- and Super NES-like gamepads, as well as DualShock 2 gamepads running through USB adapters. The face buttons are often assigned differently; for instance, the bottom face button (the one that would be labeled “A” on an Xbox controller or “X” on a PlayStation one) will be identified as “Button 2” on some devices and “Button 3” on others.

This creates two problems. The first is in assigning default control bindings. Let’s say I assign Button 2 to the “Jump” control. This will work correctly on Logitech devices and some others, requiring the player to press the bottom face button to jump, but on other devices, “Button 2” might be the right face button (B or Circle by Xbox or PlayStation standards), so the player would have to press that button to jump by default. Of course, I support arbitrary control bindings, so this can be corrected, but that’s only part of the issue.

The second problem is that the button glyphs displayed by the game are coupled with the input (Button 2, Button 3, etc.), so even if you rebind the correct button in terms of physical placement, the game might display the wrong glyph for that button. For instance, if the player were using a device for which the bottom face button was Button 3, the game would show “B” or “Circle” as the glyph for that button, when it would be appropriate to show “A” or “X.”
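One way to decouple both default bindings and glyphs from raw button indices is a per-device table that maps each raw button to a physical face position. The internals of the actual remapping aren’t described here, so this is only a sketch. Buttons 2 and 3 in the fallback layout follow the Logitech convention described above; buttons 1 and 4, and the override device with its vendor/product IDs, are hypothetical.

```python
from enum import Enum

class Face(Enum):
    BOTTOM = "bottom"  # A on Xbox, Cross on PlayStation
    RIGHT = "right"    # B / Circle
    LEFT = "left"      # X / Square
    TOP = "top"        # Y / Triangle

# Logitech-style fallback, 1-based. Buttons 2 and 3 follow the post;
# buttons 1 and 4 are assumptions.
DEFAULT_LAYOUT = {1: Face.LEFT, 2: Face.BOTTOM, 3: Face.RIGHT, 4: Face.TOP}

# Per-device overrides keyed by (vendor ID, product ID). This entry is a
# hypothetical pad whose bottom face button reports as Button 3.
DEVICE_LAYOUTS = {
    (0x1234, 0x5678): {1: Face.TOP, 2: Face.RIGHT, 3: Face.BOTTOM, 4: Face.LEFT},
}

XBOX_GLYPHS = {Face.BOTTOM: "A", Face.RIGHT: "B", Face.LEFT: "X", Face.TOP: "Y"}

def layout_for(vid, pid):
    return DEVICE_LAYOUTS.get((vid, pid), DEFAULT_LAYOUT)

def button_for_face(vid, pid, face):
    """Raw button index for a physical position; used to build default bindings."""
    for button, position in layout_for(vid, pid).items():
        if position is face:
            return button
    return None

def glyph_for_button(vid, pid, button):
    """Glyph chosen by physical position rather than by raw button index."""
    face = layout_for(vid, pid).get(button)
    return XBOX_GLYPHS.get(face, f"Button {button}")
```

With this arrangement, a default such as “Jump on the bottom face button” resolves to Button 2 on a Logitech-style pad and Button 3 on the hypothetical pad, and both show the “A” glyph.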

This problem is easiest to understand in terms of face buttons, but it extends to axes and POV hats, as well. The naming of “POV hats” dates back to their origins on flight sticks, but nearly every gamepad I’ve ever tested reports the d-pad as a POV hat, so that is how they are most commonly used today. Axis naming is even more bizarre; DirectInput supports 24 axes, named for X, Y, and Z; position, velocity, acceleration, and force; translation and rotation. Typically the left analog stick of a gamepad will be mapped to XY/position/translation, but the right analog stick and sometimes triggers (if reported as axes rather than buttons) are less standardized.
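For reference, DirectInput reports each POV hat as an angle in hundredths of a degree clockwise from north, with a low word of 0xFFFF meaning the hat is centered (drivers variously report -1 or 65535). The sketch below turns such a value into d-pad flags by snapping to the nearest of eight directions. The 8-way snapping is a design choice for illustration and isn’t something specified above.

```python
def pov_to_dpad(pov):
    """Convert a DirectInput-style POV value to (up, down, left, right) flags."""
    if (pov & 0xFFFF) == 0xFFFF:  # centered; covers both -1 and 65535
        return (False, False, False, False)
    # Snap to one of eight 45-degree sectors: 0 = north, 2 = east, 4 = south, 6 = west.
    sector = ((pov + 2250) // 4500) % 8
    up = sector in (7, 0, 1)
    right = sector in (1, 2, 3)
    down = sector in (3, 4, 5)
    left = sector in (5, 6, 7)
    return (up, down, left, right)
```

Diagonals set two flags at once, so a press at 13500 (southeast) reads as both down and right.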

The DualShock 4 is my favorite controller of the current generation, and I want to support it as well as possible, but it brings a number of additional concerns to the table. Chief among these is that its triggers are reported as both buttons and axes. This creates problems when awaiting user input to bind a control. My control binding code watches for changes to any input on any device; when it sees a change, it assigns that input to the specified control. Because each trigger activates two inputs at once, the code will only catch the first one it sees, which will often, but not always, be the axis rather than the button, because axes are checked first. However, if the trigger is only slightly depressed, such that it is still within the dead zone, the axis will be ignored and the button will be selected instead. My solution for this, against my better judgement, was to introduce a device-specific hack that simply mutes the button inputs associated with the DS4 triggers when that device is used (as detected by vendor and product ID).
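The binding race and the mute can be sketched as follows, assuming a snapshot-comparison design. Several details are assumptions: the Sony vendor ID and the two DS4 product IDs as commonly reported, the 0-based L2/R2 button indices, the dead-zone value, and the per-axis resting values passed in as `rest` (zero unless given).

```python
from dataclasses import dataclass

# Sony's vendor ID with the DualShock 4 product IDs as commonly reported
# (first and second hardware revisions); verify against your own devices.
DS4_IDS = {(0x054C, 0x05C4), (0x054C, 0x09CC)}
# Assumption: 0-based indices of the DS4's L2/R2 digital buttons under DirectInput.
DS4_TRIGGER_BUTTONS = {6, 7}
DEAD_ZONE = 8000  # hypothetical threshold on a -32768..32767 axis

@dataclass
class Snapshot:
    axes: dict     # axis name -> raw value
    buttons: dict  # button index -> pressed

def muted_buttons(vid, pid):
    """Device-specific hack: ignore the DS4's trigger buttons entirely."""
    return DS4_TRIGGER_BUTTONS if (vid, pid) in DS4_IDS else set()

def detect_binding(before, after, vid, pid, rest=None):
    """Return the first newly active input, checking axes before buttons."""
    rest = rest or {}
    for name, value in after.axes.items():
        resting = rest.get(name, 0)
        now = value - resting
        was = before.axes.get(name, resting) - resting
        if abs(now) > DEAD_ZONE and abs(was) <= DEAD_ZONE:
            return ("axis", name, 1 if now > 0 else -1)
    muted = muted_buttons(vid, pid)
    for index, pressed in after.buttons.items():
        if index not in muted and pressed and not before.buttons.get(index, False):
            return ("button", index)
    return None
```

With a trigger only slightly pressed, the axis stays inside the dead zone, so an unmuted device falls through to the button; on a DS4 the function returns nothing until the axis clears the dead zone, at which point the axis is bound.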

Additionally, the DualShock 4’s trigger axes rest at the minimum position (-32768) rather than the conventional center (zero). On an ordinary axis, I can watch for deviations from zero in either direction to see whether the input is being pushed, and in which direction. That code fails for these triggers, because changes across the zero boundary will happen both as they’re being pressed and released.
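The failure can be shown with two small functions. A zero-centered reading reports a released DS4 trigger as fully pushed in the negative direction, whereas measuring deflection from a known resting value does not. The dead-zone value and the normalization to the range [-1, 1] are assumptions for illustration.

```python
AXIS_MIN, AXIS_MAX = -32768, 32767

def zero_centered_direction(value, dead_zone=8000):
    """Naive reading that assumes the axis rests at zero."""
    if value > dead_zone:
        return 1
    if value < -dead_zone:
        return -1
    return 0

def deflection(value, rest):
    """Normalized deflection away from the resting value, in [-1.0, 1.0]."""
    delta = value - rest
    span = (AXIS_MAX - rest) if delta >= 0 else (rest - AXIS_MIN)
    return delta / span if span else 0.0
```

A released trigger at -32768 reads as direction -1 under the naive scheme but as a deflection of 0.0 once its resting value is known, and a fully pressed trigger reads as 1.0.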

It’s conceivable that my code is simply naive and should account for an arbitrary resting position. However, I would argue that axes are intended to rest at zero and that this is an unusual outlier, as evidenced by the fact that DirectInput has no mechanism for reporting axes’ resting values, and in fact, this exact issue led Microsoft to combine the Xbox 360 controller’s triggers into a single axis that does rest at zero (with the unfortunate and surely intended side effect that the triggers cannot be reliably polled with DirectInput at all, and XInput must be used instead).

My initial solution was to cache off the first values reported by polling the device immediately after it was initialized or selected within the game menu. This value (clamped to the nearest of zero, -32768, or 32767) would be treated as the resting value. This would introduce problems of its own when using flight sticks with dials that don’t automatically center themselves, but until I make a flight sim, I think that’s a risk I can take.
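The snapping step amounts to choosing the nearest of three candidates. In the sketch below, the function names are hypothetical and ties are resolved toward zero by candidate order.

```python
AXIS_MIN, AXIS_MAX = -32768, 32767
RESTING_CANDIDATES = (0, AXIS_MIN, AXIS_MAX)  # order matters: ties go to zero

def infer_resting_value(first_sample):
    """Snap the first polled value to the nearest plausible resting position."""
    return min(RESTING_CANDIDATES, key=lambda c: abs(first_sample - c))

def infer_resting_values(first_poll):
    """Apply the snap to every axis of the first poll after initialization."""
    return {name: infer_resting_value(v) for name, v in first_poll.items()}
```

Note that the result is only as good as that first poll: whatever the device reports at that moment becomes the resting value.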

However, that plan fell apart on account of getting unpredictable results back when polling a device immediately after it was acquired by DirectInput. Sometimes it would return the expected values; other times it would return a completely zeroed-out state. As this state could conceivably be a valid result for some devices, I couldn’t assume the poll had failed and needed to be retried on the next tick, and with no error or warning result I could catch, this plan seemed to have hit a wall. So, I added another hardcoded hack, forcing the resting values of these axes to the expected values when the DualShock 4 vendor and product IDs were seen.
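A hardcoded override of this kind can be as small as a lookup table. In this sketch, everything defaults to zero, and known devices replace specific axes. The DS4 IDs are as commonly reported, and the assumption that its triggers appear on the Rx/Ry axes should be checked against real hardware.

```python
# Sony vendor ID and DualShock 4 product IDs as commonly reported (assumption).
DS4_IDS = {(0x054C, 0x05C4), (0x054C, 0x09CC)}
# Assumption: the DS4's L2/R2 triggers report on Rx/Ry and rest at -32768.
DS4_RESTING = {"Rx": -32768, "Ry": -32768}

def resting_values(vid, pid, axis_names):
    """Assume every axis rests at zero unless the device is on the hardcoded list."""
    rest = {name: 0 for name in axis_names}
    if (vid, pid) in DS4_IDS:
        for name, value in DS4_RESTING.items():
            if name in rest:
                rest[name] = value
    return rest
```

Unlike the first-poll approach, this never depends on what the device reports right after acquisition, at the cost of only covering devices that are explicitly listed.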

These sorts of major changes to established systems always make me a little skittish, but so far the results have been promising. I have a little more testing to do on Mac and Linux builds, but both my DirectX and OpenGL/SDL Windows builds are behaving correctly for all my dozen or so test devices, so that’s reassuring.